Case Study

TQS Integration uses QuestDB for industrial telemetry data

TQS Integration uses QuestDB as its time series database to store sensor data in the cloud infrastructure of modern pharmaceutical production facilities.

- Massively reduced costs: significantly lower database deployment and maintenance costs.

- High throughput: reliably ingests hundreds of thousands of events per second.

- Developer friendly: integrations with developer tools make it easy to insert and query data.

- Average ingested rows/sec: 3M+

- Write speed vs InfluxDB: 10x

- Compression ratio: 6x

- Cloud uptime: 99.99999%
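QuestDB can ingest data over the InfluxDB Line Protocol (ILP), one common way for developer tools to insert telemetry. As a minimal sketch of what such a write looks like on the wire, the Python below formats one sensor reading as an ILP line. The helper `to_ilp_line`, the table name `sensors`, and the tag and field names are hypothetical; only the basic escaping and type rules are handled, and in practice the official QuestDB client libraries build these lines for you.

```python
def _escape_name(s: str) -> str:
    # Commas, equals signs, and spaces must be backslash-escaped
    # in tag keys, tag values, and field keys.
    return s.replace(",", r"\,").replace("=", r"\=").replace(" ", r"\ ")


def _format_field(value) -> str:
    # Check bool before int: in Python, bool is a subclass of int.
    if isinstance(value, bool):
        return "t" if value else "f"
    if isinstance(value, int):
        return f"{value}i"  # integer fields carry an 'i' suffix
    if isinstance(value, float):
        return repr(value)
    if isinstance(value, str):
        # String fields are double-quoted; escape backslashes and quotes.
        escaped = value.replace("\\", "\\\\").replace('"', '\\"')
        return f'"{escaped}"'
    raise TypeError(f"unsupported field type: {type(value).__name__}")


def to_ilp_line(table: str, tags: dict, fields: dict, timestamp_ns: int) -> str:
    """Build one ILP line: table,tag=v,... field=v,... timestamp_ns (hypothetical helper)."""
    # Table names only need commas and spaces escaped.
    measurement = table.replace(",", r"\,").replace(" ", r"\ ")
    tag_part = "".join(f",{_escape_name(k)}={_escape_name(v)}" for k, v in tags.items())
    field_part = ",".join(f"{_escape_name(k)}={_format_field(v)}" for k, v in fields.items())
    return f"{measurement}{tag_part} {field_part} {timestamp_ns}\n"


if __name__ == "__main__":
    line = to_ilp_line(
        "sensors",
        {"site": "plant_a", "sensor": "temp 01"},
        {"value": 21.5, "ok": True, "count": 3},
        1700000000000000000,
    )
    print(line, end="")
```

Tags are written as indexed-style labels (such as site and sensor ID), while fields carry the measured values; many such lines can be batched into one network write for high-throughput ingestion.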

Modern Industrial Analytics

TQS Integration

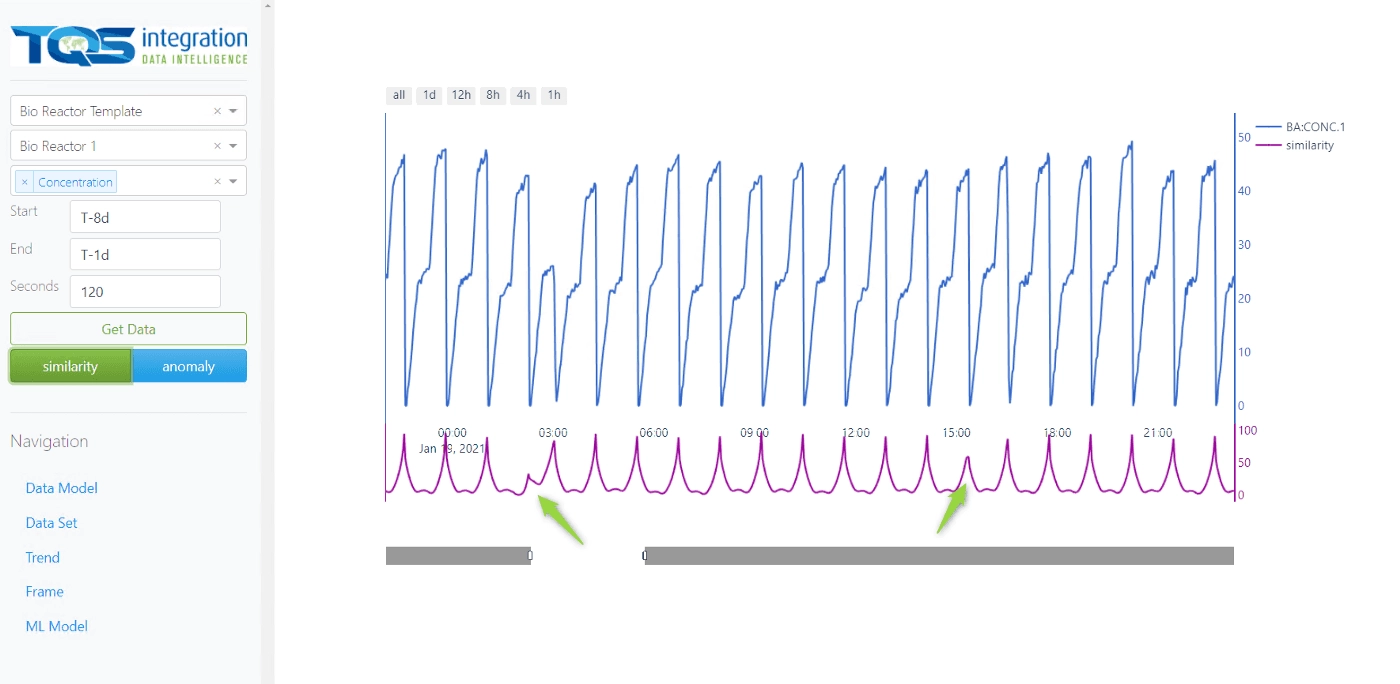

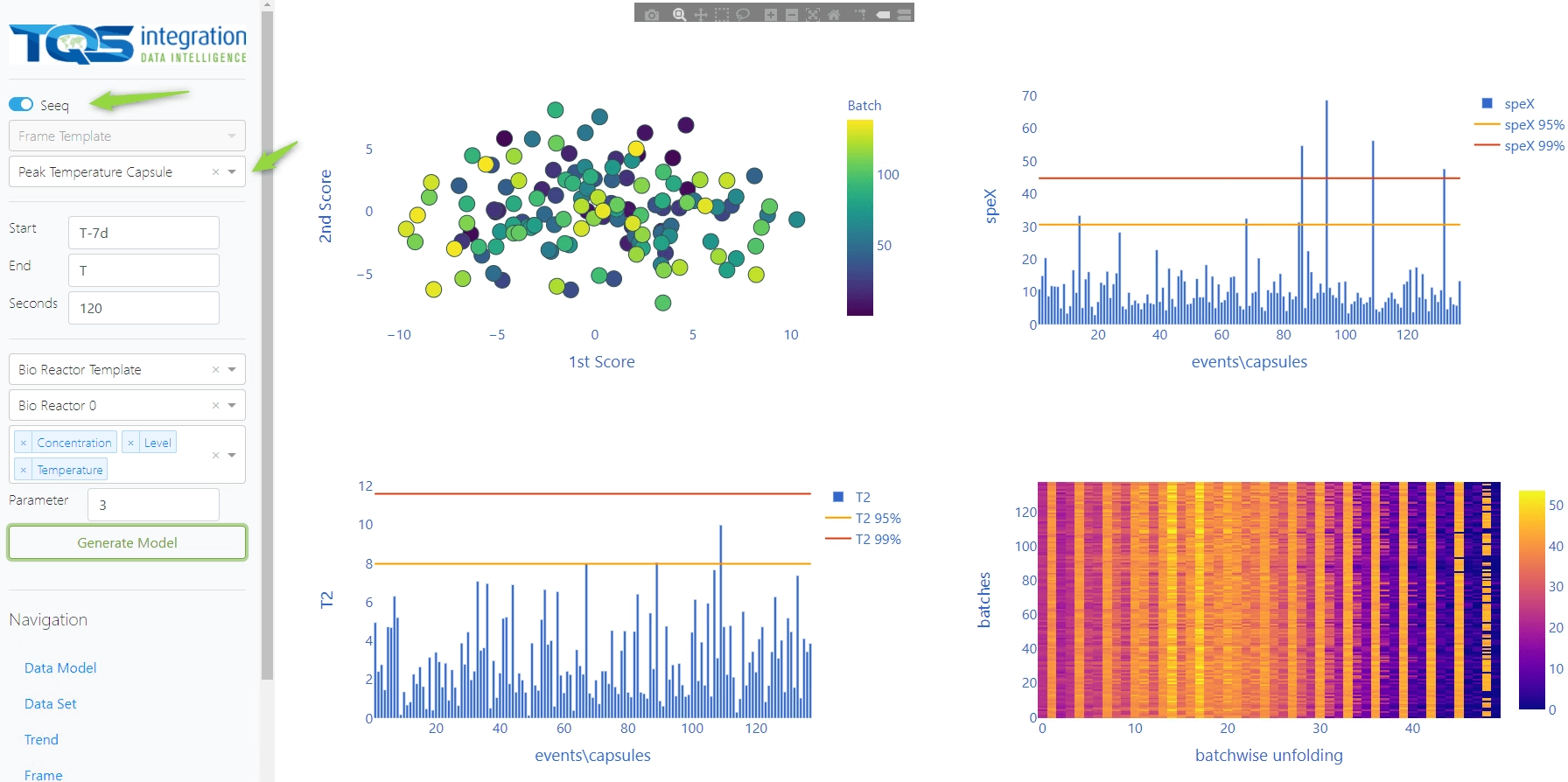

TQS Integration builds reference architectures for industrial telemetry applications that produce and process hundreds of thousands of events per second. It relies on QuestDB as its time series database for data visualization, real-time analytics, anomaly detection, and predictive maintenance.

- Predictive maintenance: TQS Integration enables predictive maintenance through time-series analysis with QuestDB.

Industrial Telemetry

Powerful and Efficient Analytics

TQS Integration builds reference architectures for telemetry and relies on QuestDB.

- Real-time in no time: real-time dashboards require high-performance data ingestion.

- SQL out!: query QuestDB in real time using SQL with premium time-series extensions.

- Efficient architecture: massive throughput on minimal hardware. Grow slow, scale fast.
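One of QuestDB's SQL time-series extensions is `SAMPLE BY`, which aggregates rows into fixed time buckets; a query such as `SELECT timestamp, avg(value) FROM sensors SAMPLE BY 1m` averages readings per minute (the `sensors` table and its columns are hypothetical). To show what that aggregation computes, the pure-Python sketch below buckets readings by calendar minute and averages each bucket. It illustrates the idea only and is not how QuestDB executes the query.

```python
from collections import defaultdict
from datetime import datetime, timezone


def sample_by_minute(rows):
    """Average (timestamp, value) readings per calendar minute, like SAMPLE BY 1m."""
    buckets = defaultdict(list)
    for ts, value in rows:
        # Truncate each timestamp to the start of its minute (calendar-aligned bucket).
        bucket = ts.replace(second=0, microsecond=0)
        buckets[bucket].append(value)
    # Emit one row per bucket in time order, as a time series query would.
    return [(b, sum(vs) / len(vs)) for b, vs in sorted(buckets.items())]


if __name__ == "__main__":
    readings = [
        (datetime(2024, 1, 1, 12, 1, 5, tzinfo=timezone.utc), 30.0),
        (datetime(2024, 1, 1, 12, 0, 10, tzinfo=timezone.utc), 20.0),
        (datetime(2024, 1, 1, 12, 0, 50, tzinfo=timezone.utc), 22.0),
    ]
    for minute, avg in sample_by_minute(readings):
        print(minute.isoformat(), avg)
```

Downsampling like this is what keeps real-time dashboards responsive: a panel plots one point per bucket instead of every raw sensor event.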

"TQS Integration uses QuestDB in data architecture solutions for clients in the Life Science, Pharmaceutical, Energy, and Renewables industries. We use QuestDB when we require a time series database that’s simple and efficient for data collection, contextualization, visualization, and analytics."

Advanced Analytics

TQS Integration relies on QuestDB

TQS Integration builds advanced analytics and data architectures for high-throughput telemetry. QuestDB provides a reliable foundation for simple and efficient data collection, with deduplication, out-of-order indexing, and consistent efficiency even under heavy workloads. That's QuestDB.
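Deduplication and out-of-order handling matter because telemetry from many devices rarely arrives in timestamp order and is often resent after network retries. As a conceptual sketch only (not QuestDB's storage engine), the Python below merges a batch of possibly out-of-order rows into a time-ordered table with upsert semantics: for each key, the most recently written row replaces any earlier one. The function `merge_dedup`, the row layout, and the choice of `(timestamp, sensor_id)` as the key are illustrative assumptions; QuestDB offers comparable behavior through deduplication on designated upsert keys.

```python
from typing import Dict, List, Tuple

# Hypothetical row layout: (timestamp_ns, sensor_id, value)
Row = Tuple[int, str, float]


def merge_dedup(existing: List[Row], batch: List[Row]) -> List[Row]:
    """Merge an out-of-order batch into a time-ordered table, keeping the last write per key."""
    by_key: Dict[Tuple[int, str], Row] = {}
    # Visit existing rows first, then the batch in arrival order,
    # so a later write with the same (timestamp, sensor_id) key wins.
    for row in existing + batch:
        ts, sensor_id, _ = row
        by_key[(ts, sensor_id)] = row
    # Re-sort by timestamp (then sensor_id for a deterministic order).
    return sorted(by_key.values(), key=lambda r: (r[0], r[1]))


if __name__ == "__main__":
    table = [(100, "a", 1.0), (200, "a", 2.0)]
    late_batch = [(150, "a", 1.5), (100, "a", 9.0), (150, "a", 1.6)]
    for row in merge_dedup(table, late_batch):
        print(row)
```

A real database avoids re-sorting the whole table on every write, but the observable result is the same idea: retried or late rows do not create duplicates, and queries still see data in time order.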